Reproducibly producing data from workflows, pipelines and coupled models

Posted on 19 June 2019

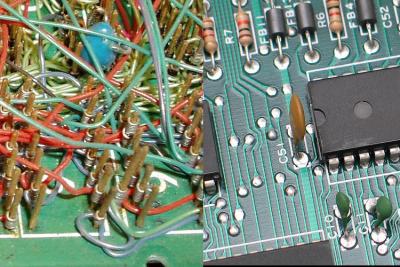

Image courtesy of Wikinaut and Christian Taube

By Sarah Gibson, Anna Krystalli, Arshad Emmambux, Alexandra Simperler, Tom Russell, and Doug Lowe

This post is part of the CW19 speed blog posts series.

Like history, reproducible data processing is just one, um, thing after another. When the number of tools, models or steps in a process grows beyond a handful, we start to feel the need for some automation or structure. Running the same sequence of tools over multiple datasets? Capturing the steps of an analysis for collaborators or students to repeat, modify and extend? Conducting a scenario analysis using coupled models? Workflow, pipeline and model coupling tools all respond to the need for reliable, reproducible analysis.

The two terms, workflow and pipeline, can often be used interchangeably. We’ll take pipeline to mean an automated process: a sequence of steps in which each step is a program (model, script, tool) that takes an input and produces an output. A workflow describes the whole process from end to end. It might include both manual actions or inputs and possibly multiple automated pipelines.
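As a minimal sketch of that definition, a pipeline can be written in Python as an ordered list of functions, each taking the previous step's output as its input. The step names and the temperature readings are hypothetical, invented purely for illustration.

```python
from functools import reduce
from typing import Callable, List, Optional

# A step is any callable that takes a list of values and returns a new list.
Step = Callable[[list], list]


def drop_missing(values: List[Optional[float]]) -> List[float]:
    """Remove missing readings, represented here as None."""
    return [v for v in values if v is not None]


def to_celsius(values: List[float]) -> List[float]:
    """Convert Fahrenheit readings to Celsius."""
    return [(v - 32.0) * 5.0 / 9.0 for v in values]


def summarise(values: List[float]) -> List[float]:
    """Reduce the readings to [minimum, mean, maximum]."""
    return [min(values), sum(values) / len(values), max(values)]


def run_pipeline(steps: List[Step], data: list) -> list:
    """Feed the output of each step into the next, in order."""
    return reduce(lambda current, step: step(current), steps, data)


PIPELINE = [drop_missing, to_celsius, summarise]
result = run_pipeline(PIPELINE, [32.0, None, 212.0])
print(result)
```

In these terms, a workflow would wrap one or more such pipelines together with any manual steps, such as choosing which raw file to feed in.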

Many systems exist to specify and orchestrate research pipelines, often expanding on functional but perhaps ad-hoc approaches. Software tools, conventions and standards all help to define, run and reproduce workflows and pipelines, focussing on different parts of the problem: reproducible computing platforms and execution environments; metadata about the steps that need to be run and the data or files that are exchanged between steps; and efficiently running or scheduling the execution of each step.

It’s typically worth starting with the simplest thing that could possibly work. Bash scripts are a very good way of glueing together scientific models and data at a small scale, and Makefiles can efficiently capture dependencies between steps in a pipeline (‘Why Use Make’ blog post).
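The core idea behind Make, rebuilding a target only when it is missing or older than one of its inputs, can be sketched in a few lines of Python. This is an illustration of the dependency check rather than a replacement for Make, and the file names and the `concatenate` action are hypothetical.

```python
import os
import tempfile
from pathlib import Path
from typing import Callable, Sequence


def is_stale(target: Path, sources: Sequence[Path]) -> bool:
    """A target is stale if it is missing or older than any of its sources."""
    if not target.exists():
        return True
    target_mtime = target.stat().st_mtime
    return any(src.stat().st_mtime > target_mtime for src in sources)


def build(target: Path, sources: Sequence[Path],
          action: Callable[[Path, Sequence[Path]], None]) -> bool:
    """Run the action only when the target is stale; report whether it ran."""
    if is_stale(target, sources):
        action(target, sources)
        return True
    return False


def concatenate(target: Path, sources: Sequence[Path]) -> None:
    """Hypothetical processing step: join all sources into the target file."""
    target.write_text("".join(src.read_text() for src in sources))


with tempfile.TemporaryDirectory() as tmp:
    raw = Path(tmp) / "raw.txt"
    out = Path(tmp) / "out.txt"
    raw.write_text("1 2 3\n")

    first_run = build(out, [raw], concatenate)   # target missing, so it runs
    second_run = build(out, [raw], concatenate)  # up to date, so it is skipped

    newer = out.stat().st_mtime + 10
    os.utime(raw, (newer, newer))                # pretend the input was edited
    third_run = build(out, [raw], concatenate)   # input is newer, so it runs again
```

Make performs the same timestamp comparison across a whole graph of targets, which is why it tends to scale better than a hand-written Bash script that reruns every step.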

When the problem space grows (for example in the number of models or datasets), scalability issues begin to manifest. Bash scripts at scale can become difficult to maintain, particularly nested Bash scripts orchestrating other Bash scripts, where technical debt can accumulate quickly and propagating error messages up through the layers can become problematic. Solving these problems takes time, but refactoring code within an academic environment is often not given sufficient priority, or even recognition, if the current system appears to work.

Reproducibility is a critical issue with workflows and pipelines. Tools with graphical user interfaces, whilst more accessible from a usability perspective, sometimes suffer from reproducibility issues when others attempt to build the model from scratch. Generally when designing workflows, much attention is given to the data and the software that runs on it, whereas runtime parameters and other configuration get short shrift. Such configuration needs to be captured at a number of levels in order for the whole setup to be truly reproducible.
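One way to picture capturing configuration at several levels is a small run record stored alongside each output. The sketch below uses only the Python standard library; the field names and the example parameters (`timestep_s`, `seed`) are assumptions chosen for illustration, not a standard schema.

```python
import hashlib
import json
import platform
import sys


def sha256_of(data: bytes) -> str:
    """Fingerprint the input data so a later run can check it is unchanged."""
    return hashlib.sha256(data).hexdigest()


def run_record(parameters: dict, input_bytes: bytes) -> dict:
    """Collect configuration from several levels into one dictionary."""
    return {
        "parameters": parameters,                     # runtime parameters
        "input_sha256": sha256_of(input_bytes),       # data level
        "argv": list(sys.argv),                       # how the script was invoked
        "python_version": platform.python_version(),  # interpreter level
        "platform": platform.platform(),              # operating system level
    }


record = run_record({"timestep_s": 60, "seed": 42}, b"1 2 3\n")
record_json = json.dumps(record, indent=2, sort_keys=True)
print(record_json)
```

A record like this does not make a run reproducible on its own, but it does make it possible to tell afterwards which settings and inputs produced a given result.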

Another overarching problem with reproducibility approaches is transparency: it is often difficult to see and understand what is happening at any particular level of the pipeline (for both the workflow creator and others when the workflow is shared). This increases the chance of errors creeping into workflows and producing erroneous results. Many GUI approaches go a long way to ameliorating this issue, but often what is needed is a way to 'gate' critical points in the pipeline for a trained researcher to eyeball the situation and ensure it is proceeding as expected. If unexpected behaviour is not caught at the right time, too much automation can lead to expensive (in terms of time and cost) and flawed use of computational and data resources.
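A gate can be sketched as a check that runs on the intermediate result after a step and stops the pipeline if it fails. In the hypothetical example below the gate is an automated plausibility check; in practice it could equally be a function that shows a plot and waits for a researcher to approve it. All names here are assumptions made for illustration.

```python
from typing import Any, Callable, List, Optional, Tuple

Step = Callable[[Any], Any]
Gate = Optional[Callable[[Any], bool]]


class GateRejected(Exception):
    """Raised when a gate refuses to let the pipeline continue."""


def run_with_gates(data: Any, stages: List[Tuple[Step, Gate]]) -> Any:
    """Run each step in order, checking its gate (if any) before moving on."""
    for step, gate in stages:
        data = step(data)
        if gate is not None and not gate(data):
            raise GateRejected(f"gate after '{step.__name__}' rejected the result")
    return data


def scale(values: List[float]) -> List[float]:
    """Hypothetical step: rescale raw readings by a factor of ten."""
    return [v / 10 for v in values]


def plausible(values: List[float]) -> bool:
    """Hypothetical gate: scaled readings are expected to lie in [0, 1]."""
    return all(0 <= v <= 1 for v in values)


def total(values: List[float]) -> float:
    """Hypothetical expensive step that should only run on checked data."""
    return sum(values)


stages = [(scale, plausible), (total, None)]

accepted = run_with_gates([1, 5, 10], stages)

try:
    run_with_gates([1, 50], stages)  # 50 scales to 5.0, outside [0, 1]
    halted = False
except GateRejected:
    halted = True
```

Placing the gate before the expensive step is the point of the design: a bad intermediate result stops the run before further compute time is spent on it.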

There are various tools which aim to help capture workflows and computational environments. For Python, Sumatra captures runtime configurations for scientific computations and tags results with information regarding the inputs and models used to produce them. ReciPy logs the provenance of the code and its outputs and provides a GUI. For the R community, drake can create Makefile-style commands which only execute the sections of a codebase which have been updated. drake has built-in parallelisation and also provides a GUI.

Container orchestration tools (argo) and continuous integration pipelines (Travis, Jenkins) take care of specifying computational environments.

The problem of matching outputs from one component of a workflow to inputs for the next component can be helped by standards for data definitions (QUDT, CSDMS standard names) and standards for file formats (OGC, arrow and Apache ecosystem formats, frictionless data packages).

Tools which focus on coupling models (OASIS, OpenMI, BMI, smif) define and use interfaces for data exchange - either leaning on language interoperability (foreign function interfaces), inter-process/networked communication, or access to a shared data store (filesystem, database, blob storage).
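The interface idea can be illustrated with two toy models that exchange a value through a small shared interface. This sketch is loosely inspired by the update/get/set pattern that such tools use, but the method signatures, the models and the variable names are all hypothetical and do not reproduce the API of any of the tools named above.

```python
from typing import List, Protocol, Tuple


class Model(Protocol):
    """Hypothetical minimal interface every coupled model must provide."""

    def update(self) -> None: ...
    def get_value(self, name: str) -> float: ...
    def set_value(self, name: str, value: float) -> None: ...


class Rainfall:
    """Toy source model: rainfall increases by 2 mm each time step."""

    def __init__(self) -> None:
        self.step = 0
        self.values = {"rain_mm": 0.0}

    def update(self) -> None:
        self.step += 1
        self.values["rain_mm"] = 2.0 * self.step

    def get_value(self, name: str) -> float:
        return self.values[name]

    def set_value(self, name: str, value: float) -> None:
        raise KeyError(f"{name} is not an input of Rainfall")


class Reservoir:
    """Toy sink model: storage accumulates whatever inflow it is given."""

    def __init__(self) -> None:
        self.values = {"inflow_mm": 0.0, "storage_mm": 0.0}

    def update(self) -> None:
        self.values["storage_mm"] += self.values["inflow_mm"]

    def get_value(self, name: str) -> float:
        return self.values[name]

    def set_value(self, name: str, value: float) -> None:
        if name != "inflow_mm":
            raise KeyError(f"{name} is not an input of Reservoir")
        self.values[name] = value


def couple(source: Model, target: Model,
           links: List[Tuple[str, str]], steps: int) -> None:
    """Advance both models, copying linked outputs to inputs at every step."""
    for _ in range(steps):
        source.update()
        for output_name, input_name in links:
            target.set_value(input_name, source.get_value(output_name))
        target.update()


rain, reservoir = Rainfall(), Reservoir()
couple(rain, reservoir, links=[("rain_mm", "inflow_mm")], steps=3)
storage = reservoir.get_value("storage_mm")
```

Here the exchange happens in memory within one process; the same interface could sit in front of inter-process communication or a shared data store without the coupling loop changing.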

Tools for defining and executing pipelines (CWL implementations, cylc, luigi, Pegasus, Taverna) may conceive of the pipeline as a directed acyclic graph of tasks, then focus on scheduling, sometimes working against HPC schedulers (PBS, Slurm).
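The directed acyclic graph view can be sketched with `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later). The task names are hypothetical, and this only shows how an execution order and parallelisable batches fall out of the graph; it is not how any of the tools above are implemented.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it can start.
dependencies = {
    "download": set(),
    "clean": {"download"},
    "simulate": {"clean"},
    "validate": {"clean"},
    "report": {"simulate", "validate"},
}

# One valid serial order in which every task follows its dependencies.
order = list(TopologicalSorter(dependencies).static_order())

# Group tasks into batches whose members could run in parallel,
# for example as separate jobs submitted to a scheduler.
sorter = TopologicalSorter(dependencies)
sorter.prepare()
batches = []
while sorter.is_active():
    ready = sorted(sorter.get_ready())  # tasks with all dependencies done
    batches.append(ready)
    sorter.done(*ready)                 # mark the whole batch as finished

print(order)
print(batches)
```

Because `simulate` and `validate` both depend only on `clean`, they end up in the same batch, which is the kind of parallelism a scheduler can exploit.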

It seems inevitable that workflow management will need to become part of research culture. Automation can be very exciting once attempted, even for smaller projects, but can seem intimidating in the beginning. While many tools are making strides towards simpler, more user-friendly pipeline setup, they still take motivation to adopt. Indeed, in some cases it might be overkill, and pushing too much onto researchers might be counterproductive. One way to instill some of these practices is to maintain pipelines and workflows at a lab level. This allows code worth investing in to be selected (which helps to justify spending time and money on developing it), and new members can be introduced to the concepts through working on the shared code for shared benefit.