The first Science Paper hackathon: how did it go?

Posted on 13 November 2014

By Derek Groen, Research Associate at University College London.

This September, Joanna Lewis and I organised a Paper Hackathon event in Flore, Northamptonshire, with support from both the Software Sustainability Institute and 2020 Science.

Our highly ambitious goal was to write a scientific draft paper over the course of two and a half days within a highly informal setting. Did we manage to accomplish that? In many of the projects we did!

The projects

In the end we accepted five proposed Hackathon projects (see the Appendix for their original descriptions) and rejected two. Two weeks before the event, we had already distributed the 21 participants among the five project teams, which were:

- Project #1 - A software reproducibility investigation. Led by Joanna Lewis, proposed by Jonathan Cooper.

- Project #2 - A comparison of code development approaches and techniques in academia. Led by James Osborne and Derek Groen, proposed by James Osborne.

- Project #3 - A study of animal/cell dispersal. Led and proposed by Maria Bruna.

- Project #4 - A protocol paper on Bayesian inference of animal receptor models. Led and proposed by Ben Calderhead and Zhuoyi Song.

- Project #5 - A lattice-Boltzmann solver written in JULIA. Led by Mayeul D'Avezac, proposed by James Hetherington.

The event - Wednesday

The Paper Hackathon kicked off with a lunch, followed by several short presentations about the Hackathon, the Institute, 2020 Science and the five selected projects. We had formed the groups in advance, so we could split up into groups and get started on the projects. Many of the groups worked during the day as well as in the evening, enjoying a traditional pizza-based dinner in between. As some participants worked until quite late, we decided not to organise an evening social activity, although many participants ended up taking a swim later in the evening (croquet was up for grabs too, but somehow that set remained unused throughout the event...).

The event - Thursday

On Thursday we started with a quick breakfast followed by more paperhacking. We asked each project to give a progress update in the afternoon. This let us see whether all the projects were progressing nicely, and it also gave others a good opportunity to chip in extra inspiration to further enhance each project. In the evening we had a barbecue dinner, which must have been slight torture for the on-site landlord, who was on a week-long fast, and opted for a freeform evening schedule after that. Many of us kept working until 8.00 or 9.00 in the evening (and team JULIA went until the wee hours), and then participated in a pub quiz, with a small group finishing the night with some in-pool volleyball.

The event - Friday

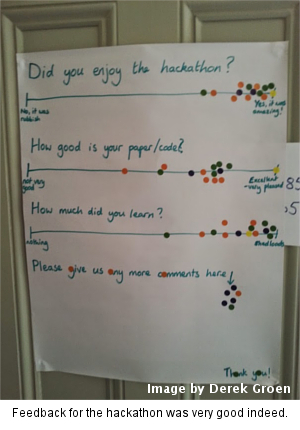

After some last-ditch efforts we performed peer review on all the Hackathon projects. Naturally, few journals actually have a review turnaround time of less than 24 hours, so we opted to have some of the participants act as reviewers instead. The reviewers were positive across the board and provided suggestions for further enhancements and polishing after the Hackathon.

And, to close it all, we took a group picture (of course team JULIA was missing again, still working)!

And now?

Well, the Paper Hackathon resulted in no fewer than five paper drafts (one running to 24 pages), two software tools and one cloud-based toolkit. Several of the project teams are keen for a follow-up event to add more polish to their work, while others have already organised follow-ups themselves!

Of course, fully featured and fully polished journal papers are seldom written overnight, so I believe it's only fair to give the participants some time to put the finishing touches on their projects. However, in a couple of months I certainly do aim to give you all a brief update!

Appendix – summary of the accepted projects

1. A software reproducibility investigation where we aim to go far beyond just replicating existing studies, to be able to reproduce the key features/results of experiments, and extend them to look at related scenarios or new questions. We ask each participant to bring a case study, along with some thoughts on what it would take (or has already taken) to make it usefully reproducible. What could lead to errors in your papers, how costly could each be, how do they influence each other, how could you guard against each, and what are the costs of doing so?

2. A comparison of code development approaches and techniques, with the aim of assessing the benefits of formal development techniques, and how they should be modified to better suit academia. We are looking for participants who are involved in collaborative code development and are willing to share their experiences, or with knowledge about software engineering.

3. A complete study of animal/cell dispersal, where we will aim to put together the different stages involved in studying a specific system of interacting agents (from getting the individual paths from an experimental data set, to inferring the individual-based model, to deriving a continuum model for the population density). Crucially, we will try to close the loop and see if the resulting model can be used to predict the experimental data, which is something lacking in most existing studies. We are looking for people with expertise in any of these study stages (tracking data acquisition, stochastic modelling, model inference) and/or with an experimental data set to test.

4. A protocol paper on Bayesian inference of animal receptor models, which will allow researchers to estimate an important new parameter (namely the refractory period distribution) in a robust and reproducible manner. We are looking for participants with expertise in web-based cloud computing interfaces, general user interfaces and software automation, Markov chain Monte Carlo techniques and data visualization.

5. A paper describing the development experience of writing a lattice-Boltzmann code for the first time in a new programming language (JULIA). We are looking for participants with some knowledge of lattice-Boltzmann, or who are keen to develop using the JULIA programming language.