Best coding practices in Neuroscience

Posted on 8 June 2017

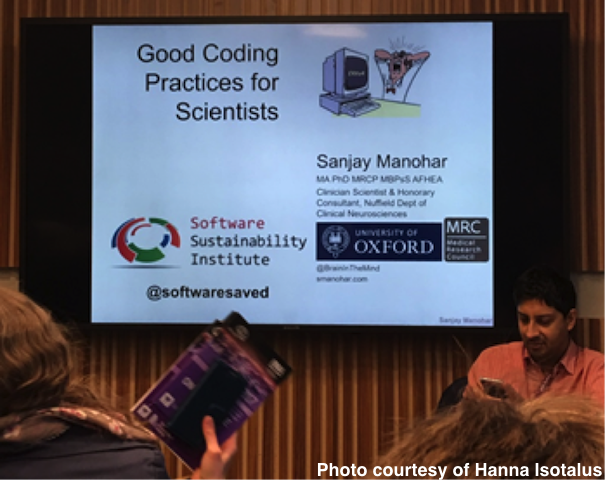

By Sanjay Manohar, University of Oxford, and Software Sustainability Institute fellow.

We have a problem in neuroscience. The complexity of data analysis techniques increases every year. With each increase in complexity comes an increase in the possibility of error. We have already been plagued by such problems, which have been reported on the internet and in the news. With increasing quantities of code being written, these problems seem unlikely to subside. How can we address the root of the problem?

In a questionnaire I regularly give to my students, one recurrent and ominous statistic always emerges: neuroscientists are, by and large, taught by each other to code. A PhD student is taught by his postdoc, who is in turn guided by her PI, who herself learned to program on the job with help from colleagues. Nobody in the loop has been formally taught coding practices, or implements the kinds of guidelines or conventions that are commonly imposed when programming in industry. This, I believe, is a central part of the problem.

I see a great deal of code during my "code clinic". This is a weekly one-to-one session I have set up, where individuals in the Oxford Neuroscience department can book a half-hour slot with me to improve their code. Through this, I regularly review code being used in the department, advising on style, reliability, code management, data structuring and statistical modelling. Sometimes it is a joy, and sometimes it is a pain. But pain makes you grow: you spot patterns of avoidable errors, and techniques to minimise them. This pain has been the primary motivation for my Good Coding Practice seminars. I boldly claim, in my seminar advert, "you will discover how to move from writing a series of one-use scripts to writing well-planned, transparent, reusable code."

Of course, making the transition from scripting to reusable code is hard. I always receive violent objections when I criticise the use of “clear all” and “del” to remove variables from the workspace. On 10th April, I ran the Good Coding Practice seminar at the British Neuroscience Association Festival of Neuroscience, and that time was no exception! The gasps and gapes when I dropped the bombshell betrayed the disbelief in the room. Having piqued people’s interest by challenging their most elementary coding practice, I attempt to give them the conceptual tools to understand the solution: the use of namespaces.

What are namespaces? This is a concept that few neuroscientists have come across, yet it is intimately familiar to anybody who has written modular software. By walking through the stages of a function call and the creation of scope, I attempt to convince the audience that every use of "clear" can be neatly avoided. Once they better understand what I am trying to say, I introduce the doctrine of referential transparency.
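To make the idea concrete, here is a minimal Python sketch of how a function's own namespace removes the need for "clear" or "del". It is an illustration only, not material from the seminar: the function name `mean_reaction_time`, the 2000 ms cutoff and the sample data are all hypothetical.

```python
import statistics


def mean_reaction_time(reaction_times_ms, max_valid_ms=2000.0):
    """Return the mean of the reaction times at or below max_valid_ms.

    Hypothetical example function; the cutoff value is an assumption.
    """
    # `valid` lives only in this call's local namespace. It disappears when
    # the function returns, so there is nothing to clear or del afterwards.
    valid = [rt for rt in reaction_times_ms if rt <= max_valid_ms]
    return statistics.mean(valid)


# Made-up data for two sessions.
session_a = [350.0, 420.0, 5000.0, 390.0]
session_b = [510.0, 480.0]

# Each call starts from a fresh scope, so nothing left over from session A
# can leak into the analysis of session B.
mean_a = mean_reaction_time(session_a)
mean_b = mean_reaction_time(session_b)

# Referential transparency: the result depends only on the arguments,
# so calling again with the same input gives the same answer.
assert mean_reaction_time(session_a) == mean_a

# The temporary variable never reached the workspace.
assert "valid" not in globals()
```

Because the function reads nothing from the workspace and writes nothing back to it, any call can be replaced by its result without changing what the rest of the script does.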

Once neuroscientists begin to wrap up their code into neat bundles, defining their inputs and outputs clearly and documenting the requirements and error conditions, then we’ll see improvements in code reliability and reusability. Reusability means less code is rewritten from scratch—a massive and expensive problem today. The only way to promote code reuse is extensive documentation and testing of the library, and the very first stage of this is to package code correctly. Once this is established, we can talk about the contract and create an API.
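As a rough sketch of what such a bundle might look like in Python, the function below states its inputs, outputs and error conditions in a docstring and is paired with a small test. The name `percent_signal_change` and the particular contract are hypothetical, chosen only for illustration.

```python
def percent_signal_change(signal, baseline):
    """Express each sample as a percent change from a baseline.

    Inputs:
        signal:   non-empty sequence of numbers (raw samples)
        baseline: nonzero number, in the same units as signal
    Output:
        list of floats, one per sample
    Error conditions:
        ValueError if signal is empty or baseline is zero
    """
    if len(signal) == 0:
        raise ValueError("signal must contain at least one sample")
    if baseline == 0:
        raise ValueError("baseline must be nonzero")
    return [100.0 * (s - baseline) / baseline for s in signal]


def test_percent_signal_change():
    # Normal use: the documented input gives the documented output.
    result = percent_signal_change([100.0, 110.0, 90.0], 100.0)
    assert result == [0.0, 10.0, -10.0]

    # The documented error condition is actually enforced.
    try:
        percent_signal_change([1.0, 2.0], 0)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for a zero baseline")


test_percent_signal_change()
```

The docstring plays the role of the contract: a colleague can reuse the function without reading its body, and the test records what the contract promises.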

So the dream is to break the vicious cycle of scientists teaching scientists, propagating the habits and error-prone practices that lead to code explosion, bugs and code decay. Was I successful this time? I don’t know. Only the next code clinic will tell.