Mentorship programme: Scraping health-related articles and analysing trends

Posted on 14 September 2022

Mentorship programme: Scraping health-related articles and analysing trends

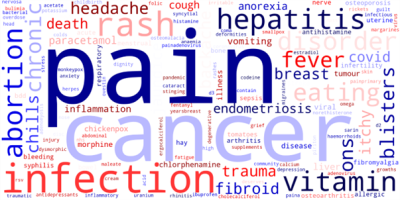

Fig 1. Wordcloud depicting most mentioned

Fig 1. Wordcloud depicting most mentionedhealth-related terms in sitemap 48 of BBC.com

By Arooj Hussain.

This blog post reflects on our Learning to Code mentorship programme as part of a Research Software Camp.

In May this year, I signed up for the Learning to Code Mentorship Programme organized by the Software Sustainability Institute, without much knowledge of where the journey would lead me. All I had in mind was that I needed to brush up on my Python skills by working on a small pilot project that would give me a head start for my Ph.D.

I was fortunate enough to be paired with Jay DesLauriers of the University of Westminster, whose patience and calm demeanor were as helpful as his interactive sessions and demos. The weekly one-on-one meetings served not only as a guide for the immediate task at hand but also gave me that deadline rush you have with real-world projects.

The project and the process

Jay and I agreed upon scraping the BBC website for health-related articles and analysing the trends as to which diseases/disorders were most discussed and hence, most prevalent lately; as well as what remedial measures/ medicines were most frequently mentioned aka prescribed. We called our Project “Named Entity Recognition of Drugs and Disorders from BBC Health Articles”.

We broke the project down into three parts - scraping the data, analysis and visualisation, which allowed me to learn different software packages and libraries in conjunction with plain Python. Since I had relatively little experience with web scraping, we started off with scraping and analysing just one article and then scaling it up to 50 articles from the latest sitemap using a Regular Expression based search. Playing with BeautifulSoup to extract relevant text from the articles was beautiful in itself and so was the step-by-step analysis using NLTK and then spaCy. Referring to a medical knowledge base viz. UMLS or MESH did give some hiccups to my laptop, but the journey of moving from one software package to the other, learning new things along the way, and understanding the suitability of each was a very useful experience.

We primarily worked in VS Code but shifted to Google Colab towards the end due to some irregular connectivity issues with the knowledge bases. Even though I generally prefer having the work I do right on my local machine and the ease that VS Code offers in creating environments using Conda as well as the one-time installations using pip are advantageous as compared to the non-permanent instances in Colab, I would still upvote Colab for the ease it provides in sharing and working from any machine.

In the final stages of the project, we decided to use Matplotlib to visualise our results in the form of a word cloud (see Fig. 1) as well as a basic word frequency plot. Even though the project is yet to be put on the shelf of closed and accomplished works, as we intend to take it a bit further by doing some more detailed analysis and visualisation, the current deliverable is a small something to be proud of to us, considering the evolution of the project as well as of the mentee.

I highly recommend these mentorship programmes to anyone who wants to take a deep dive into learning by doing. Whatever they are planning to work on, I'm sure the team at SSI will pair them with the most suitable mentor to help them achieve and even outperform their expectations.