The grand challenges of teaching coding to humanities students

Posted on 28 June 2017

By Iza Romanowska, University of Southampton, and Software Sustainability Institute fellow.

It may be challenging to teach an old dog a new trick, but to change him into a cat is a whole new level of difficulty. So when we embarked on the ambitious task of teaching archaeologists to code a simulation, we knew we needed to make an extra effort. How do you explain a while-loop to someone who has never seen a line of code? How do you discuss different testing paradigms when you know the main issue will be to get the code to run in the first place? How simple can you make a simulation without losing all of its functionality? These types of questions are best approached by diving into the deep end and running a training workshop on an unsuspecting sample of not-so-computationally-savvy-yet-quite-interested researchers. Here we report on a workshop sponsored by the Software Sustainability Institute and present a few lessons we learnt along the way.

Archaeologists, usually found knee-deep in mud or elbow-deep in medieval manuscripts, are not known for their outstanding computational literacy. This translates into a limited use of many computational tools commonly employed in other disciplines. In particular, formal computational modelling techniques such as simulation, which require a high level of technical and mathematical skill, are severely underused in the discipline. The aim of our workshop was therefore to train the next generation of researchers in software used in one particular simulation technique: agent-based modelling. Agent-based models employ individual software units, called agents, which interact with each other and with their environment, creating little virtual societies. These ‘artificial societies’ can be constructed in many different ways; they can be placed in various types of environments and plagued with natural disasters without the prior approval of an ethics committee. In a nutshell, they provide a fantastic petri-dish-style testing environment for the myriad theories archaeologists come up with every day.
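For readers who have never seen one, the idea of an agent-based model can be sketched in a few lines. The following is only an illustrative Python sketch, not the workshop's teaching material: the names `Forager` and `run_simulation`, the grid size, and the resource values are all assumptions made up for this example. Each agent wanders at random over a small grid and picks up raw material where it finds some, so agents affect one another indirectly by depleting a shared environment.

```python
import random


class Forager:
    """One agent: a hypothetical hunter-gatherer with a position and a tally."""

    def __init__(self, x, y):
        self.x = x
        self.y = y
        self.collected = 0

    def step(self, grid, rng):
        size = len(grid)
        # Random walk: shift by -1, 0 or +1 on each axis, wrapping at the edges.
        self.x = (self.x + rng.choice([-1, 0, 1])) % size
        self.y = (self.y + rng.choice([-1, 0, 1])) % size
        # Interact with the environment: take one unit of material if present.
        if grid[self.y][self.x] > 0:
            grid[self.y][self.x] -= 1
            self.collected += 1


def run_simulation(size=10, n_agents=5, n_steps=100, seed=42):
    """Run a toy model; all parameter values are arbitrary assumptions."""
    rng = random.Random(seed)  # fixed seed so that runs are repeatable
    # Environment: each cell starts with 0 to 3 units of raw material.
    grid = [[rng.randint(0, 3) for _ in range(size)] for _ in range(size)]
    initial_total = sum(map(sum, grid))
    agents = [Forager(rng.randrange(size), rng.randrange(size))
              for _ in range(n_agents)]
    for _ in range(n_steps):
        rng.shuffle(agents)  # vary who moves first in each time step
        for agent in agents:
            agent.step(grid, rng)
    return agents, grid, initial_total


if __name__ == "__main__":
    agents, grid, initial_total = run_simulation()
    for number, agent in enumerate(agents):
        print(f"forager {number} collected {agent.collected} units")
```

Even a toy like this already raises the kind of question a real model is built to explore, for example how quickly a landscape is depleted as the number of foragers grows.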

Formal computational methods based on developing software are not commonly applied to archaeological research and are taught by only a handful of universities, so events like this have a significant impact: first, because they open up a new skill to people who otherwise have no means of attaining it, and second, because it is relatively easy to persuade a significant proportion of future practitioners to adopt best practice in computational research following the Institute guidelines. The workshop was followed by a traditional conference session on archaeological simulations and a roundtable discussing the prospects of this technique in our discipline.

Delivering the tutorials, discussing examples of famous simulations and answering endless questions of ‘why is my code not working’ resulted in this short list of lessons learnt from teaching non-tech folk a demanding computational technique:

- Sometimes it is worth sacrificing some of the right terms and conciseness.

This reminds me of my first day at my first coding course. The first words of the instructor were: “You can run Python scripts from the terminal”. It is not that I did not understand the words used: I went for a run every morning, had some (admittedly rather limited) knowledge of world reptiles, had seen many medieval scripts and had been to Heathrow. Yet put together they just did not make any sense (other than in a very Salvador Dali kind of way). Keep this in mind when introducing a new subject to an otherwise perfectly well-educated audience.

- Make it relevant, or, even better, make it familiar

When trying to master a completely new skill, the last thing one needs is to be further confused by an unfamiliar context used as an example. The content of what is being done (e.g., we are simulating a hunter-gatherer foraging) can significantly aid the understanding of how it is being done (e.g., we write this specific code to make him/her walk in a random fashion), or at least provide a good idea of why we are doing it in the first place (e.g., we want to understand the pattern of how s/he collects raw material). We could see that our students appreciated that we used simulation examples derived exclusively from archaeology or the social sciences.

- Do not get swamped in details

We knew that sooner or later some of our students would come across the “Nothing named variable-name has been defined” error. It pops up all the time and, in 90% of cases, it means there is a typo in the code. Of course, sometimes the variable really has not been defined, for any one of a multitude of reasons, but spending half an hour explaining each potential case is not feasible if you have a mere two days to get people up and running in building scientific simulations.

- Make it fun

Here the nature of agent-based modelling was squarely on our side: creating little humans wandering across the screen is like playing a computer game. And even if it is not exactly a rollercoaster ride, making simple graphs or working together to solve a specific computational task can be turned into a relatively entertaining activity with a little bit of creativity.

- Be positive and get people working together

People get frustrated easily. When you have been a successful academic for three decades but now cannot get the code to work correctly, the common reaction is to drop the frustrating task and return to the one you are good at. This tendency is difficult to reverse, but providing a lot of encouragement and a positive attitude can work wonders. Similarly, making people work together removes a lot of the ‘I am too stupid to understand’ attitude, because participants see that others, apparently rather intelligent human beings, struggle just as much as they do.

- Set up a few booby traps

A lot of courses and tutorials are built in such a way that the student can sail smoothly through the tasks just by following the instructions. Yet as anyone who has burst out laughing at the Onion article “Computer Science to be renamed googling stack overflow” knows, this is not a realistic experience. We therefore intentionally built errors into the tutorials to force the students to learn how and where to seek help when problems arise. Many of them later remarked that the experience of having solved these issues made them feel ready for the challenges of coding.
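The typo behind the “has not been defined” error in the “Do not get swamped in details” lesson is also an easy trap to plant on purpose. The snippet below is a small illustration in Python rather than in the software used at the workshop, which this post does not name; the function `count_steps` and the misspelled `step_cuont` are invented for the example. Python's equivalent complaint is a `NameError`.

```python
def count_steps(n_steps):
    """Count simulation steps; contains a deliberate typo for students to find."""
    step_count = 0
    for _ in range(n_steps):
        step_count += 1
    # Planted bug: 'step_cuont' is a misspelling of 'step_count',
    # so Python has no variable of that name to return.
    return step_cuont


try:
    count_steps(3)
except NameError as error:
    # The message names the misspelled variable, which is the clue to follow.
    message = str(error)
    print(message)
```

Reading the name in the message and comparing it, letter by letter, with the one defined a few lines earlier is usually enough to find the culprit, which is the habit a planted error is meant to build.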

The workshop took place as a satellite event to the annual Conference in Computer Applications and Quantitative Methods and was led by Dr Iza Romanowska and Dr Stefani Crabtree together with Dr Benjamin Davies and Dr Colin Wren. For more information about simulation in archaeology, including future events, visit the Simulating Complexity website.

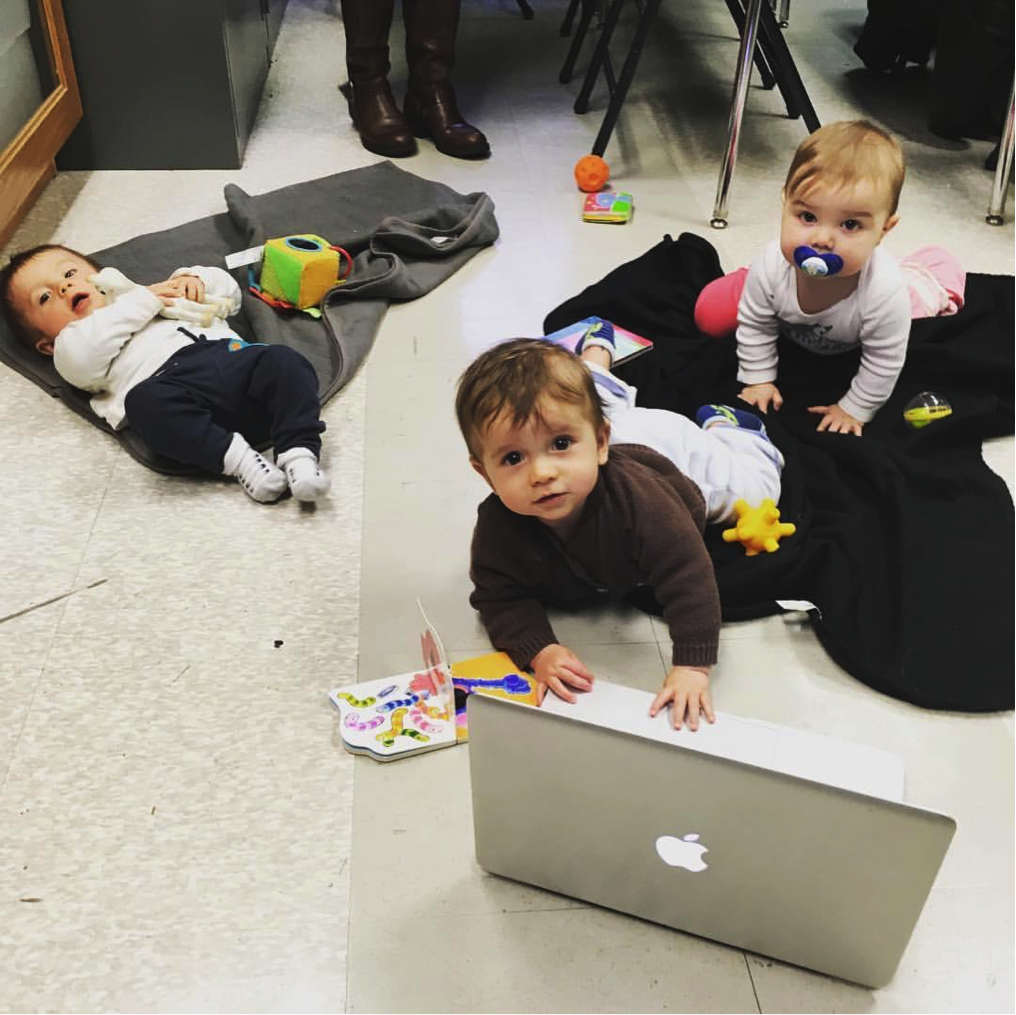

You are never too young or too old to learn new skills. (Photo taken at the workshop.)