Docker Workshop 2017

Posted on 31 July 2017

By Raniere Silva, Community Officer, Software Sustainability Institute.

Last year, during the First Conference of Research Software Engineers, Iain Emsley, Robert Haines and Caroline Jay hit on the idea of organising a meeting about Docker and how researchers are using it. Ten months later, 60 researchers, developers and librarians met in Cambridge for the Docker Containers for Reproducible Research Workshop (C4RR).

The workshop consisted of one sponsored keynote by Microsoft, 20 talks and four lightning talks, and attendees could participate in one of two demo sessions. There were many success stories involving containers and, when high performance computing (HPC) was involved, Singularity was presented as a good alternative to Docker.

Introduction

If I had to select one talk from C4RR to summarise the workshop, my choice would be “Building moving castles: Scaling our analyses from laptops to supercomputers” by Matthew Hartley et al. With some images from Hayao Miyazaki’s Howl's Moving Castle, Matthew talked about the challenge of performing (wheat field) data analysis in different computational environments: Windows, macOS, Linux, HPC, Amazon Web Services (AWS), Microsoft Azure, and others. Solving the dependency (hell) graph in all computational environments is almost impossible and extremely time-consuming, since each environment needs its own recipe. Containers are helping Matthew's team at the John Innes Centre to explore their wheat data by allowing all researchers to use the same computational stack, independently of the machine used.

High Performance Computing

Every speaker who talked about HPC told C4RR attendees that system administrators will not let you use Docker on their machines because, at the moment, the Docker daemon requires root privileges. Matthew Hartley told us that "the infrastructure angels brought us Singularity" to get around the daemon's need for root privileges.

Michael Bauer, one of the developers of Singularity, presented a talk providing more under-the-hood details of Singularity and highlighted to the audience that Singularity is compatible with any Docker-based workflow they currently use.
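
To give a rough feel for what that compatibility means in practice, here is a minimal Python sketch that builds the equivalent command lines for the two tools. It only assembles the commands and does not run them. The image name and the command are placeholders chosen for illustration, not something shown at the workshop, and the sketch assumes that `singularity exec` is pointed at a Docker image through a `docker://` URI.

```python
import shlex


def docker_command(image, command):
    """Build a `docker run` invocation (talks to the root-owned Docker daemon)."""
    return ["docker", "run", "--rm", image] + list(command)


def singularity_command(image, command):
    """Build a `singularity exec` invocation that reuses the same Docker image."""
    return ["singularity", "exec", "docker://" + image] + list(command)


def as_shell(args):
    """Render an argument list as a copy-and-paste shell line."""
    return " ".join(shlex.quote(arg) for arg in args)


if __name__ == "__main__":
    image = "python:3.6"               # hypothetical image name
    command = ["python", "--version"]  # hypothetical command
    print(as_shell(docker_command(image, command)))
    print(as_shell(singularity_command(image, command)))
```

The point of the sketch is that the image stays the same; only the tool that launches it changes, which is why an existing Docker-based workflow can move to an HPC machine without being rebuilt.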

Today, HPC machines not only have multi-core CPUs but also have GPUs with hundreds, if not thousands, of cores. GPUs are faster for certain applications and, because of this, many researchers are looking to run their code with CUDA, "a parallel computing platform and application programming interface (API) model created by Nvidia", as explained on the CUDA website. Paul K. Gerke talked about his experience using nvidia-docker, a "plugin" that helps users work around the need for specific drivers when executing CUDA code on Nvidia hardware. Paul told us that nvidia-docker works but could handle the case where one of the machines encounters an error better.

Reproducibility

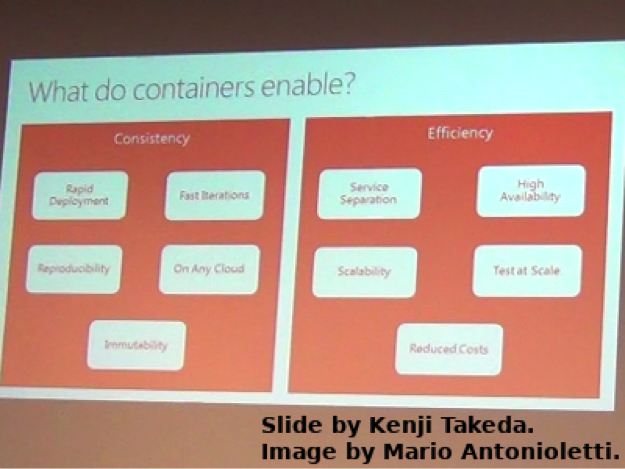

As highlighted by Kenji Takeda, our C4RR keynote speaker, there is a reproducibility crisis. Many are fighting to solve this crisis, e.g. Lorena Barbara, and containers can be a powerful tool in this battle, since they enable consistency and efficiency; see Kenji's slide on this point, reproduced here.

Rawaa Qasha brought a discussion about reproducible workflows to the workshop. Researchers need not only their computational environment but also the workflow they use to be reproducible. The common obstacles to reproducing a workflow are an insufficiently detailed workflow description, an insufficient description of the execution environment, the lack of a reproducible execution environment and missing input data. Containers can help with the execution environment but not with the others.
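
The four obstacles above can be read as a checklist. The following Python sketch is only an illustration of that reading, not a tool presented in the talk: the `WorkflowRecord` class, its field names and the example values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class WorkflowRecord:
    """Hypothetical record of what is published alongside a workflow."""
    description: str = ""        # detailed description of the workflow steps
    environment_notes: str = ""  # description of the execution environment
    container_image: str = ""    # reproducible execution environment
    input_data: str = ""         # location of the input data


# One (field, label) pair per obstacle listed in the paragraph above.
CHECKS = [
    ("description", "workflow description"),
    ("environment_notes", "execution environment description"),
    ("container_image", "reproducible execution environment"),
    ("input_data", "input data"),
]


def missing_items(record):
    """Return the labels of the checklist items the record leaves empty."""
    return [label for field, label in CHECKS if not getattr(record, field).strip()]


if __name__ == "__main__":
    # A record that ships a container image and nothing else.
    record = WorkflowRecord(container_image="example/analysis:1.0")
    for label in missing_items(record):
        print("missing:", label)
```

A record that provides only a container image still fails three of the four checks, which mirrors the point that containers cover the execution environment and nothing more.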

Two other interesting talks related to reproducibility were “Reproducible Analysis for Government” by Matthew Upson and “Using Docker and Knitr to create reproducible and extensible publications” by David Mawdsley et al. Matthew talked about the challenge of providing analyses of data collected by the government that will be used to guide public policy. Many steps of these analyses are done by copy and paste, which is not reproducible. David showed how he and his colleagues at the University of Manchester used Docker to reproduce their publication, which was written with Knitr, a tool for "dynamic report generation with R".

Sustainability

Containers look great for reproducibility, but are Docker and Singularity themselves sustainable? This was the question that James Mooney and David Gerrard, two librarians, brought to the workshop. Their talk covered many of the points that researchers ignore when trying to solve today's runtime issues, but that are essential if we want to reproduce someone's work in 5, 10, 20 or even 50 years' time. Some of the questions were: will container tool X be available in 50 years? Will container tool X be backward compatible with a 50-year-old container image? Will container image repository X be online in 50 years? Will the documentation of software Y containerised in image X be accessible in 50 years' time? History has shown us that the answer to all of these questions is "no". Although James and David weren’t able to tell us which tool we all should be using to solve these problems, they did leave us with an important message: "think about preservation now and not later."

Conclusions

The workshop was a great place to get inspiration about containers and how to apply them to your current project. Containers will continue to be a hot topic in the next few years, and Singularity will become more popular among researchers who need to use their HPC centre. As for reproducibility, be careful not to be blinded by either Docker or Singularity; at present they only solve today's execution issue.

Thanks

We would like to thank our sponsors, Microsoft and Stencila, and our co-organiser, Cambridge Computational Biology Institute.

Video Recording

If you missed C4RR, most of the activities were recorded and made available online. Visit the workshop agenda for the link to the videos.