Sharing reproducible research – minimum requirements and desirable features

Posted on 22 May 2018

Sharing reproducible research – minimum requirements and desirable features

By M. H. Beals, Catherine Jones, Geraint Palmer, Mike Jackson, Henry Wilde, John Hammersley, Daniel Grose, Robin Long, Adrian-Tudor Panescu, Kirstie Whitaker

By M. H. Beals, Catherine Jones, Geraint Palmer, Mike Jackson, Henry Wilde, John Hammersley, Daniel Grose, Robin Long, Adrian-Tudor Panescu, Kirstie Whitaker

This post is part of the Collaborations Workshops 2018 speed blogging series.

What does reproducible mean? Who do we want to help and support by making our research reproducible? At what point does non-reproducible research become good enough (and carries on to the highest standards of reproducibility?)

In our discussions during the first speed blogging session at the Software Sustainability Institute’s Collaborations Workshop in Cardiff in March 2018, we brainstormed criteria for judging the quality of reproducible research. What emerged were two clear messages: 1) We all have our own overlapping definitions of the desirable features of reproducible research, and 2) there is no great benefit in rehashing old discussions.

In this blog post we outline 9 criteria that can be met by reproducible research. We believe that meeting as many of these as possible is moving in the right direction. Source code and data availability are often seen as important requirements, but documenting what code is trying to achieve, which other software libraries are required to run the code, the greater research ecosystem, what lessons were learned in the development of the research, and the persistent availability of the materials are all separable, and valuable, components of our movement towards easy-to-use reproducible research.

Incremental progress is still progress. As a group we are committed to supporting the baby steps that individual researchers and developers can take to make their work more reproducible. Therefore, we reject the question of minimum requirements. How long is a piece of string? It doesn’t matter so long as the string exists and is fit for that day’s particular task.

Source code

Source code comes in many different forms, depending on the language it’s written in and the preferences and background of the writer. At the most basic level, it is the uncompiled code that allows the program to be run. When we’re new to writing code, or have only written code for ourselves in the past, it may have been written solely for the purpose of solving the task at hand, rather than with thought to its readability or reusability in future. Only when we’ve experienced sharing code with others, collaborating with or contributing to a wider group, do the benefits and importance of proper structure and commenting become clear. Thus, defining what ‘source code’ is depends on the context of our individual experience. If we are new to sharing projects, simply providing a file or files with the uncompiled code—which, when compiled locally, produces the outputs we’ve used in our research—would be a first step in sharing the source code. If we are more experienced, the source code would not be considered complete if it didn’t contain a license declaration, proper commenting, appropriate definitions and dependencies, and a suitable readme file on how to get started - these, then, effectively become part of the definition of source code.

Documentation

Documentation comprises many levels with many different aims. It can document the analysis/algorithm we are implementing, how to use the code, or how to develop the code. These levels reflect what we wish to achieve with the code itself. If it is to be open, and allow people to understand what we did, we need documentation in the code. If we want people to be able to run it, then we need to document how to run our code. If we want people to expand it, then we need to document how to set up a development environment to be able to do so.

Source code documentation should always enhance the readability of the code. It should do this by, at a minimum, explaining what the code does; that is, “this code implements the blah algorithm/analysis described in paper y”, “or this function implements some well known formula”. This needs to be the starting point. From here it should help the reader understand how the source code implements this algorithm, “function spam calculates the blah coefficient” for example. This leaves the reader clear as to what each part or function of the code does.

For someone to be able to take our code as is, and re-run it, the next level of documentation is needed—how to use it. This, at a minimum, should inform people what language it is written in, how to build the software (if applicable), how to load a data set (if needed) and how to execute the code. Preferably this would be an index.md or README file which would be seen as soon as someone unzips a release bundle or, in the case of GitHub, BitBucket or GitLab, clones the repository or views it in a web browser. The desirable level is then to provide the user with more detailed instructions. Here, we need to assume they have never used the language our code is written in or its build tools (if applicable)—we should add a link to a page explaining how to install the language and build tools, explain how to build or load our code or package (i.e. import blah.spam), how to load the data and run it, and how to change inputs or variables.

The final type is developer level. If we want someone to improve our code then we need developer documentation to help. Here, we need to, at least, describe how to set up a development environment—what tools a developer needs—and to document the inputs and outputs of our code, e.g. file formats and configuration parameters. Support is further achieved by explaining what each file controls, where to add new features, and what the structure of the code is. With this, people will know where to begin when adding or improving functionality.

Executables

Executables (i.e. compiled source code) can help reduce the time required for replicating research results. These need to include information on the platform for which they were produced, both at the hardware level (e.g. 32-bit or 64-bit processor architecture) and the software one (e.g. which operating system does the executable target). Moreover, they must be accompanied by information on the various runtime parameters they expect, such as the location of datasets being processed.

In order to facilitate reproducibility, it is preferable for executables to be distributed alongside source code, as, in their standalone form, they might obscure certain details regarding the workflow or methods, and might also be short-lived (e.g. the platform for which they were produced is no longer available). In order to improve their value especially in regards to preservation, executables could be included in packages implementing the whole context in which they should be used, such as so-called containerization solutions.

Dependencies

All software has a number of implicit and explicit dependencies to enable it to be built and run. A bash script needs the Linux operating system or a compatible execution environment; a C program requires a C compiler; and, a Python program may require the NumPy mathematical programming library. In many cases, specific versions of these dependencies may be required as languages, interpreters, compilers and libraries evolve through time: commands, instructions, constants, functions, and input and output formats can be added to or deprecated and removed from newer versions. Software might not build or run under either older versions or newer versions. For example, Python programs that run under Python 2 will not run under Python 3 if they contain the command “print ‘hello’”, as Python 3 requires this to be written as “print(‘hello’)”.

It is very valuable for reproducibility and reuse, and also for our future selves and colleagues in our research group, if we document in detail our software’s dependencies, the environment within which our software can be built and run. This includes: operating systems, languages, interpreters, compilers, third-party packages needed to build and run our software, standards and specifications (e.g. relating to input or output file formats) and databases, web services, or other online resources that our software might need.

Useful information about each dependency that can be documented includes: what the dependency is, its version number (or, if not available, the date you accessed that dependency), alternative versions (if, for example, you know that your program works with alternative versions), what the dependency is needed or used for, and, if applicable, the licence of the dependency (e.g. whether it is open source or proprietary).

Narratives of what the code is doing

When sharing research which we hope is reproducible, interpretability is key. Particularly when it comes to the source code. Without the ability to effectively read and understand the code in front of us, how could we hope to reproduce the research attached to it? Be that its inputs, outputs or construction.

Interpreting source code—be it our own or someone else’s—can be daunting, and measures on how to do that well are dependent on the context of the research at hand. Documentation is an essential part of any piece of code and it can be extremely powerful when done in an effective way, but it should not be the only part of our research which describes what we hope the code in front of us actually does. In an ideal world, other forms of ‘external’ documentation would be provided as part of the research project which detail what our scripts do. One of the most accessible, and effective, ways of doing this is pseudocode.

In the case where we have source code available (and usable) to us, pseudocode removes the need for any particular knowledge in a particular language or system on the part of the reader. If what we want to do is replicate results and build an understanding of how the author constructed their research but—like so many researchers out there—we are without the time to crawl through the source code, pseudocode can get us there. Similarly, if we want to reproduce the algorithm/analysis from scratch using different tools, languages, and environments, pseudocode can help us.

On the other hand, we may not have any useful source code at all for whatever reason: maybe it is closed-source or, in the case of outdated languages, it may not be compilable anymore. With pseudocode (or any other descriptive writing on top of the code itself), a reader can attempt to take what the original researcher aimed to do on a more conceptual level, and recreate that entire process in a more accurate way.

An example of pseudocode is as follows:

algorithm ford-fulkerson is

input: Graph G with flow capacity c,

source node s,

sink node t

output: Flow f such that f is maximal from s to t

(Note that f(u,v) is the flow from node u to node v, and c(u,v) is the flow capacity from node u to node v)

for each edge (u, v) in GE do

f(u, v) ← 0

f(v, u) ← 0

while there exists a path p from s to t in the residual network Gf do

let cf be the flow capacity of the residual network Gf

cf(p) ← min{cf(u, v) | (u, v) in p}

for each edge (u, v) in p do

f(u, v) ← f(u, v) + cf(p)

f(v, u) ← −f(u, v)

return f

The style, vocabulary, notation and visualisations used should be chosen so as to closely model those commonly used in the field of investigation.

Being able to break down a piece of code into deliberately human-readable parts, and providing those to the reader, makes the piece of work that code supports something more powerful.

Narratives of our whole workflows

A piece of software, however well designed and documented, is only one part of a larger research workflow. To understand its design and function—as well as its underlying assumptions and eccentricities—requires at least a broad overview of the ecosystem in which it resides. Does it assume inputs from a particular version of proprietary software? Does it require user intervention during processing and what guidelines restrict or frame the user’s behaviour or choices in these instances. Are the final results cleaned or fed into subsequent processing stages? Those wishing to facilitate reproducible research should, at a minimum, provide readers a narrative description of where this piece of software fits in the larger analytical framework, which steps proceed and follow it, and the extent to which the outputs can or should vary owing to user intervention during its use. This narrative can be augmented by the use of a workflow file, using languages such as YWAL or WDL, which will formalise workflow steps, aid both an understanding of the logic behind its creation and use and its redeployment in a similar or modified workflow by other researchers.

Input and output data

A description of the input and output data of a particular computational process. In general, output data is the fruit of the research, and is usually reported in the research output itself (e.g. journal papers or reports). These are our p-values, simulation results, and fancy data visualisations. Here we ask if our input data is also available, and does it clearly correspond to the outputs of the research. What does “available” mean? In the paper text itself or archived publicly online is great. A note saying that we are willing to email CSV files if requested is not so great (email addresses can change, promises can be broken). Of course, if the data is sensitive or private then public availability may be inappropriate; however, descriptions of the input data may be a small step in the right direction. A useful guide to follow is the FAIR principles, ensuring input data is Findable, Accessible, Interoperable and Reusable.

A lack of available input data immediately inhibits rerunning the process exactly; that is, running the same process with the same data. A lack of description of the data may even, in the worst case, render our descriptions of the processes and methodologies meaningless.

Licence

We might release the source code of your software, hoping that others will maintain and extend it, but, unless there is an accompanying licence explicitly stating they have the right to do so, chances are they won’t.

A software licence is a legal document that tells others what they can, and cannot, do with our software and any obligations upon them. This can include what they can, or cannot, use the software for; whether or not they can modify or extend it; and whether or not they can redistribute the software or their modified versions to others.

It is recommended that any software we release, or share, include a software licence. For research, we should consider an open source licence, so others can, at least, view our source code and so understand how our research was implemented in practice. See the Software Sustainability Institute’s guide on Choosing an open source licence.

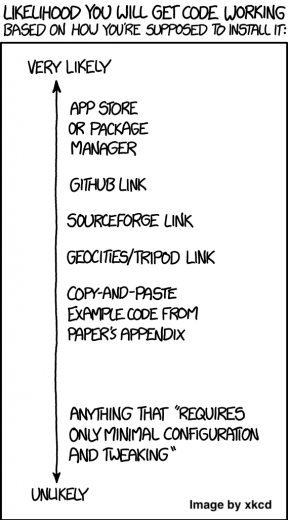

Deposit and publish for expected readers

In order to to share our reproducible research, it needs to be somewhere where our audience is able to locate and access it. First, it is important to be clear as to where our intended audience will look for the reproducible research; being part of a wider collection of material enhances the benefits of publication, namely traffic, as demonstrated by domain-specific journals. The most appropriate place of deposit may be an internal system that is not formally published, an institutional repository, a source code hosting service such as GitHub or BitBucket, a digital repository such as Zenodo or Figshare, or a specific repository requested by a journal publisher, such as those required by Nature.

Of course, simply depositing our research is not enough; we need to consider the metadata used to describe our research. This metadata will be used by others to locate the material and needs to address the potential retrieval terms to maximise the potential for sharing in future.

Consideration should also be made of the long-term value of the material—digital objects need active management to preserve long-term value. This active management includes ensuring the objects can be opened and run and establishing who is responsible for the intellectual content. This is of particular relevance if the material is to remain solely within an organisation, as it is more likely to receive benign neglect here than in an externally managed service. That this long-term management is likely to be done by people beyond the original project team and this makes the foregoing advice even more important.

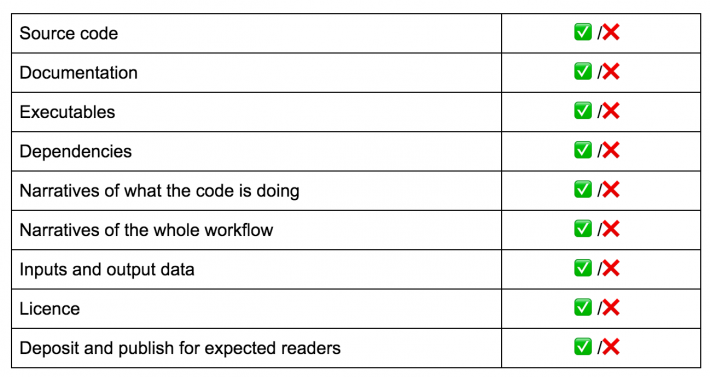

Towards a criteria-based summary of research software

The foregoing nie criteria can serve as a checklist for when we are preparing to share our research, embodied as software and data. However, they can also serve as a summary for others, based on the presence or absence of the resources associated with each criterion. For example:

Future iterations could provide a finer-grained description as to the quality or completeness with which each criterion is satisfied.

Such a summary provides a means to allow other researchers to determine whether our software, as held within source code hosting services or institutional or third-party digital repositories, provides the resources they require for their specific goals. For example, if a researcher wants to rerun some software or to validate some results, they’d likely want source code, executables, dependencies, documentation, and input and output data. If they want to extend and reuse it, they’d also want a licence. If they want to understand how the research was implemented in-code, they might only be interested in the source code and narratives of what the code is doing.