Sharing code – what's the point?

Posted on 11 October 2018


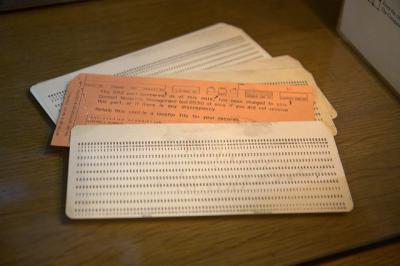

Image courtesy of Marcin Wichary.

By Danny Wong, NIAA-HSRC & UCL-DAHR.

Note: This is a slightly edited version of the original blog post at Danny Wong's website.

I’ve recently had the great fortune of publishing a paper that attracted significant interest from the general news media. It was even picked up by the BBC, The Guardian and all the major newspapers in the UK!

As usual, I’ve shared the source code for the analysis publicly, this time as a repository on GitHub. I have included the manuscript as an .Rmd file, with the data wrangling and modelling code in a chunk at the start of the .Rmd file. I have also included the knitted .html version of the manuscript so that people can see what would happen if they knitted the document in R, IF they had the raw data at hand.

This brings me to the two points of my post. Firstly, I cannot share the raw data publicly, because it contains too much sensitive information about individual patients and about the individual UK hospitals that contributed data. Unscrupulous individuals might be able to identify patients within the data by linking it to other publicly available data, which is a big no-no. Secondly, even when I do share the code, many people in my field of research don’t have the skills to do anything with it.

Currently, the clinical research world faces a big headache. On the one hand, we want transparency in research in order to tackle the problem of unreproducible research (much commentary has been written about the reproducibility crisis affecting science). The argument is that we should be able to understand how research teams arrive at their findings, with the working behind their statistical analyses shown, so that we can check that the findings are real and replicable rather than merely spurious. On the other hand, we need to maintain the confidentiality of individual patients, especially in large epidemiological studies where patients may not have consented to their data being shared.

Even if the data were shared alongside my code, there may not be enough people out there who could read or understand the code. In Health Services Research (my area of clinical research), there have been some studies into which software packages are most widely used. In one study of the US literature, “Stata and SAS were overwhelmingly the most commonly used software applications employed (in 46.0% and 42.6% of articles respectively).” And while R is rising in popularity, very few consumers of research (as opposed to producers of research) know how to code in R. This means that shared code would rarely be read by anybody.

So is there any point in me sharing my code? I guess my answer has to be principled, if not pragmatic. I hope that by sharing my code, someone who repeats my studies in the future can do so without having to reinvent the wheel, and perhaps build upon my work. It is also an opportunity for a future reader to look at my code and suggest improvements, or to use it as a teaching example. There may be a future in which many more researchers switch to R, or in which more consumers of research become comfortable reading code. Indeed, we are encouraging that future by teaching as many people as possible the basics of R and reproducible research.

Successful first day of our Data Science for Doctors course September 2018 edition. 20 more people who have been introduced to the world of #rstats, to go forth into the #NHS and academic world! @datascibc

— Danny Wong 黄永年 (@dannyjnwong) September 27, 2018

Day 2 of our Data Science for Doctors course at @RCoANews! The students are learning to visualise data! pic.twitter.com/yN72L8aqJU

— Danny Wong 黄永年 (@dannyjnwong) September 28, 2018

However, there is still a lot of work to do to improve research reproducibility. We need solutions that at least allow simulated toy datasets to be shared alongside the code, so that third parties can run it and appreciate it in its entirety. Reading the code and working through it in our heads does not allow it to be dissected independently. We also need to encourage journals to recruit editorial board members with some coding ability, and to persuade keen coders to review the code of papers submitted to journals. Until that happens, we should encourage people to post their code online, and reward authors for doing so by pushing for shared code to be cited as evidence of scientific output.