How do you help build intermediate software engineering skills and help people go beyond the basics?

Posted on 9 August 2021

By Sam Mangham, Alison Clarke, Iain Barrass, Paddy McCann, Adrian D’Alessandro and Bailey Harrington.

This blog post is part of our Collaborations Workshop 2021 speed blog series.

The SSI and many institutions offer researchers training in the basics of programming and data analysis, but what’s the best way to build on those skills so that people can write better, more sustainable software?

The Carpentries are increasingly well-established as a method for delivering training in basic computing skills to researchers, but helping people to move beyond that point presents significant challenges. Although feedback from Carpentries learners is often very positive immediately following a workshop, it is frequently unclear how the skills and knowledge gained are retained and applied in the following weeks, months and years.

Identifying and reaching an audience for intermediate software skills training can be difficult. People’s assessment of their own capabilities may be flawed, particularly their awareness of gaps in their knowledge. They may be very capable in one area but a relative novice in another, or they may think they are more capable than they are.

As researchers advance, their needs will typically become more and more specialised, though commonalities will exist across problem domains. Does the use of “toy” problems, as in Carpentries courses, diminish in value? Is there a need to be more explicit and specific about how researchers can apply technologies and techniques to their own work?

Given these challenges, what approaches are most appropriate for building intermediate software engineering skills? What training should be delivered, how should it be delivered, and what other support should be offered to researchers to allow them to develop their skills?

An SSI intermediate course now exists

The Software Sustainability Institute recently piloted a new course which aims to teach “a core set of established best practice software development skills” including software design, coding conventions, working collaboratively, and using continuous integration. This looks to be a really valuable course, and is available as a Carpentries-style course. However, practice with real-world problems and ongoing reinforcement are needed to master these skills.

Following up after intermediate training is just as important as following up after introductory training. Although more techniques may fit the students’ research work, these skills atrophy without being refreshed. Even careful syllabus design won’t, and shouldn’t, capture precisely those skills the researcher will need in day-to-day software development. Reinforcement of the learning can be informed by a post-learning survey carried out several weeks or months after the training course. Opportunities to use the skills beyond the student’s “normal” work should be encouraged.

The philosophy of the Carpentries includes a “graduation” in which learners move towards helping and instructing at future courses. This, or some other form of knowledge transfer, should also be encouraged in other Carpentries-style courses and learning methods.

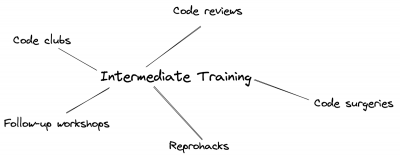

Other ancillary activities beyond “the day job” should be suggested. We identify below some such events where interaction with other students increases exposure to the learned material. Contact with, and ongoing support from, instructors should be maintained where possible and appropriate.

No one-size-fits-all answer

Whilst the Intermediate Software Carpentry material is a great way to build a broad range of skills, it’s not going to be a one-size-fits-all answer. Different domains may need different skills or techniques: for example, researchers in physics may need to focus on Python-based command-line solutions, which are very different from the R and RStudio workflows appropriate for bioinformatics researchers.

For beginner material it’s easy to assume a common baseline of knowledge. For intermediate work that builds off a broader set of skills, it is harder to ensure that course attendees have the necessary prerequisite knowledge, as a lack of experience may make it difficult for them to judge their current level. Additionally, as courses get more complex, it is desirable to give attendees a more concrete understanding of why the intermediate material is useful, but examples that show the usefulness may need to be tailored to different domains.

For intermediate training to have maximal impact we need a range of approaches: lifting researchers up to the level needed to enter the course, adjusting our delivery of the material to leave long enough gaps for skills to be absorbed, and providing ongoing support to help them understand and implement the lessons in their day-to-day work.

Range of solutions

As there’s no one solution, we’ve identified a number of ways to introduce intermediate topics to learners and to develop their progress incrementally.

Code clubs

“Code clubs”, including code review clubs, let research staff and students bring their own code and discuss it with colleagues, which gives opportunities to reinforce techniques shown in taught sessions. Club members are also exposed to code beyond their own, so their thinking about particular aspects isn’t restricted to whatever suits their current work focus. Such discussions have the further advantage that comments on another’s code needn’t be “fully formed”: “I think you can approach this problem by …” affords consideration of a topic without having to be confident in its complete application, and allows competing views to be sought.

Code clubs which review students’ own real-world code, in a non-threatening environment, can be an ideal way to repeat exposure to sustainable development techniques and gradually increase their use.

Reproducibility Hackathons

In a similar vein to code clubs, reproducibility hackathons (such as ReproHack) are another way to improve researchers’ computing skills. While reproducibility isn’t specifically an intermediate software engineering skill, it requires a number of non-basic skills. Reproducibility hackathons allow researchers to bring their own code to a group session with peers and RSEs where they can dedicate time to improving their code and coding skills. One advantage is that researchers who are already motivated to make their computational research more reproducible are the most likely to attend, so participants tend to engage fully. The drawback is that this method does not always bring in new people.

Code Surgeries

Following a taught course with bring-your-own data/code surgeries provides continued support and learning, which can be extremely beneficial for the attendees. It also lets instructors and others delivering the training assess how helpful it has been in attendees’ typical work, and whether the topics covered should be modified to better meet the audience’s needs. However, this type of follow-up can be difficult to implement in a way that is both feasible for the instructors/helpers and immediately useful to attendees.

There are several reasons this type of follow-up can be difficult to run successfully. Firstly, it can be hard to define the scope of the surgery so that people bring along appropriate questions which instructors have the experience to answer. Secondly, attendees may find it difficult to identify a suitably small problem to be answered in a one-off surgery. Finally, attendees may fall victim to the XY problem, i.e. asking for help with Y when the real issue is X: instructors need experience to understand attendees’ motivations for the questions they ask.
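To make the XY problem concrete, here is a minimal Python sketch of a hypothetical surgery question. The scenario and the function names are invented for illustration and are not taken from a real session. Suppose an attendee asks “how do I get the last three characters of a string?” (Y) when what they actually need is a file’s extension (X):

```python
from pathlib import Path


def last_three_chars(filename: str) -> str:
    """Literal answer to the question asked (Y): the last three characters."""
    return filename[-3:]


def file_extension(filename: str) -> str:
    """Answer to the underlying need (X): the extension, without the dot."""
    return Path(filename).suffix.lstrip(".")


if __name__ == "__main__":
    # Hypothetical file names, chosen to show where the two answers diverge.
    for name in ["results.csv", "analysis.ipynb", "README"]:
        print(f"{name}: Y gives {last_three_chars(name)!r}, "
              f"X gives {file_extension(name)!r}")
```

The two functions agree on `results.csv`, which is why the attendee’s approach looks right at first. They diverge on `analysis.ipynb` and `README`, and that is the point at which an experienced helper would ask what the attendee is really trying to achieve.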

Final Thoughts

The new Intermediate Software Skills course created by the SSI should prove to be a great resource for teaching researchers the skills they need to create more sustainable software. Instructors should, however, bear in mind the need to follow up, so that course participants can make the most of their newly learned skills when they get back to their day jobs.